Predictive Analytics Models and Algorithms: A Complete Guide to Smarter Business Decisions

Predictive analytics is no longer a niche capability. It has become a core business function for organizations competing in a data-driven economy. Companies are moving beyond reporting past performance and are increasingly focused on predicting future outcomes and acting on them in real time using advanced predictive analytics models.

According to Gartner, by 2026, organizations that successfully operationalize AI and data analytics will significantly outperform their peers in decision-making speed and business outcomes. This highlights how predictive analytics is becoming central to enterprise strategy and competitive advantage.

From predicting customer churn and optimizing pricing to forecasting demand and detecting fraud, predictive analytics plays a key role across industries. To realize its full potential, it is essential to understand not just the models and algorithms, but also how to choose, apply, and scale them effectively. Organizations that invest in the right data analytics consulting services are more likely to unlock meaningful insights and achieve measurable impact.

This blog covers the key predictive analytics models and algorithms that drive smarter business decisions, along with their real-world applications.

Predictive analytics models are mathematical and statistical frameworks designed to project future outcomes based on historical data. These models examine patterns, relationships, and trends in data to estimate what is likely to happen next.

Unlike traditional analytics, predictive analytics models are dynamic. They can be retrained as new data arrives and adapt their predictions accordingly. This ability to evolve makes them highly valuable in situations where conditions change frequently, such as financial markets, customer behavior, and supply chain operations.

However, the effectiveness of a predictive model depends on three critical factors:

Without these, even the most advanced model can produce misleading results.

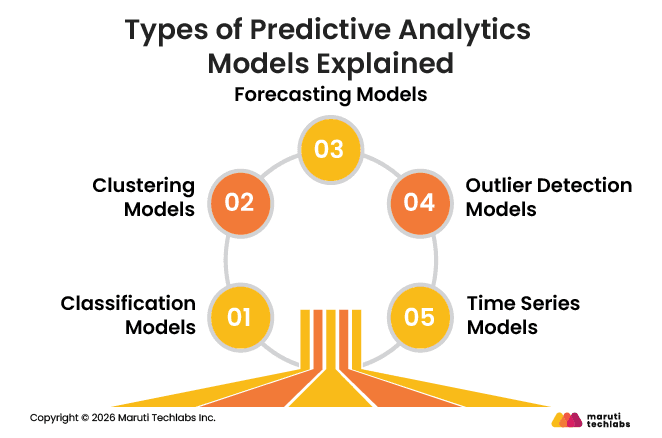

Predictive analytics models can be broadly categorized by the type of problem they solve, such as classification, segmentation, forecasting, anomaly detection, and time-series trend analysis. Many of the latest predictive analytics models in 2026 combine these approaches to deliver more accurate and scalable results across complex business environments.

Classification models are used when the output is categorical. They determine which class a data point belongs to. For example, a bank may use classification to decide whether a transaction is fraudulent or legitimate.

These models work by learning decision thresholds from historical data. While they are highly effective for binary decisions, they can struggle with imbalanced or poorly defined classes.
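To make the idea of a learned decision threshold concrete, here is a minimal sketch in plain Python. It assumes a single feature (transaction amount) and made-up historical labels. The function name `learn_threshold` and the sample data are illustrative only; real classifiers learn thresholds over many features at once.

```python
def learn_threshold(amounts, labels):
    """Return the cut-off that misclassifies the fewest historical rows.

    labels use 1 for fraudulent and 0 for legitimate; a transaction is
    flagged when its amount is strictly greater than the cut-off.
    """
    values = sorted(set(amounts))
    # Candidate cut-offs are the midpoints between neighbouring distinct amounts.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]
    best_threshold, best_errors = None, len(amounts) + 1
    for t in candidates:
        errors = sum((x > t) != bool(y) for x, y in zip(amounts, labels))
        if errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold, best_errors


# Hypothetical historical transactions: amount and known outcome.
amounts = [12, 25, 40, 55, 60, 300, 450, 80, 900, 1200]
labels = [0, 0, 0, 0, 0, 1, 1, 0, 1, 1]

threshold, training_errors = learn_threshold(amounts, labels)


def is_fraud(amount):
    """Apply the learned threshold to a new transaction."""
    return amount > threshold


print(threshold, training_errors, is_fraud(500), is_fraud(30))
```

If the fraudulent class were much rarer than in this toy data, counting raw errors would favor never flagging anything, which is one way the imbalance problem mentioned above shows up.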

Clustering models group similar data points without predefined labels. They are commonly used in customer segmentation, where businesses identify groups of users with similar behaviors.

The strength of clustering lies in revealing hidden patterns. However, results can differ considerably depending on how clusters are defined and on the quality of the input data.

Forecasting models estimate numerical values based on historical trends. These models consider multiple variables such as seasonality, demand changes, and external factors.

They are widely used in financial planning, inventory management, and sales forecasting. Their effectiveness depends heavily on the stability of historical patterns.

Outlier models focus on spotting anomalies in data. Such irregularities often indicate fraud, system failures, or unusual behavior.

Their value lies in early detection. However, distinguishing between genuine anomalies and normal variations can be challenging.
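One simple way to flag anomalies is a robust score based on the median and the median absolute deviation (MAD). The sketch below is one possible approach, not the only one. The `cutoff` of 3.5 is a commonly used rule of thumb, and the daily totals are invented for illustration.

```python
import statistics


def find_outliers(values, cutoff=3.5):
    """Flag values whose modified z-score (median/MAD based) exceeds the cutoff."""
    median = statistics.median(values)
    deviations = [abs(v - median) for v in values]
    mad = statistics.median(deviations)
    if mad == 0:
        return []  # no measurable spread, so nothing is flagged
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [v for v in values if 0.6745 * abs(v - median) / mad > cutoff]


# Hypothetical daily transaction totals with one suspicious spike.
daily_totals = [100, 102, 98, 101, 99, 103, 97, 500]

print(find_outliers(daily_totals))              # strict cutoff
print(find_outliers(daily_totals, cutoff=1.0))  # looser cutoff flags normal variation too
```

Lowering the cutoff shows the trade-off described above: the detector catches more, but it also starts flagging ordinary variation.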

Time series models examine data collected over time to detect trends and patterns. These models are vital when time is a key factor, such as predicting stock prices or demand cycles.

They work well with structured, sequential data but can struggle with sudden disruptions or non-linear changes.
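The sketch below uses simple exponential smoothing, one of the most basic time series techniques, to show why sudden changes are hard. The series and the `alpha` value are made up for illustration. After the level jumps from 10 to 20, the forecast needs several periods to catch up.

```python
def exponential_smoothing(series, alpha=0.5):
    """Return one-step-ahead forecasts.

    forecasts[i] is the forecast for period i + 1, made after observing
    periods 0..i. Higher alpha reacts faster but is noisier.
    """
    level = series[0]
    forecasts = [level]
    for observed in series[1:]:
        level = alpha * observed + (1 - alpha) * level
        forecasts.append(level)
    return forecasts


# Hypothetical demand with a sudden level shift at period 4.
demand = [10, 10, 10, 10, 20, 20, 20]
forecasts = exponential_smoothing(demand, alpha=0.5)

for period, (actual, forecast) in enumerate(zip(demand[1:], forecasts), start=1):
    print(period, actual, forecast)
```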

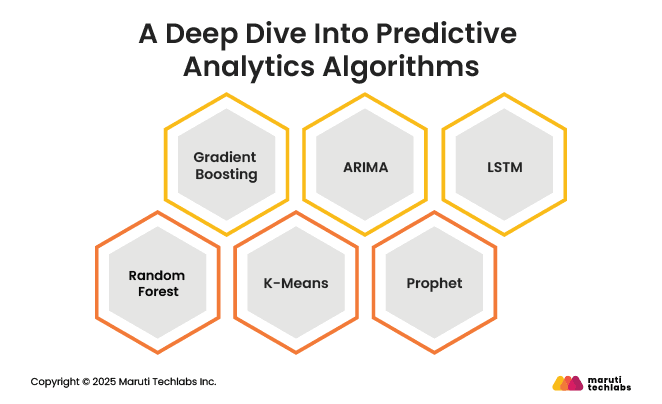

Predictive analytics algorithms form the foundation of how models learn from data and generate accurate predictions. In practice, they rely on classification, regression, and clustering methods, which reflect the role of machine learning in predictive analytics.

Understanding how they work, along with their pros and cons, is essential for selecting the right approach.

Random Forest is a powerful ensemble learning algorithm that improves prediction accuracy by combining multiple decision trees instead of relying on a single model. The idea is simple. A single decision tree can easily overfit the data, but a collection of trees trained on different subsets of the data can produce more stable predictions.

| How It Works | When To Use | When Not To Use |
| --- | --- | --- |
| Uses a technique called bagging, where multiple models are trained independently, and their predictions are averaged. | | |
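The bagging idea can be sketched in a few lines of plain Python. This is a deliberately simplified illustration, not a full Random Forest. It uses one-split trees (stumps) on a single feature, whereas a real Random Forest grows deeper trees and also samples features at each split. All names and data here are hypothetical.

```python
import random


def fit_stump(xs, ys):
    """One-split tree: predict 1 when x > threshold, choosing the threshold with fewest errors."""
    values = sorted(set(xs))
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])] or [values[0]]
    return min(candidates, key=lambda t: sum((x > t) != bool(y) for x, y in zip(xs, ys)))


def fit_bagged_stumps(xs, ys, n_trees=25, seed=0):
    """Train each stump independently on its own bootstrap sample."""
    rng = random.Random(seed)
    n = len(xs)
    thresholds = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # sample rows with replacement
        thresholds.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return thresholds


def predict_proba(thresholds, x):
    """Average the individual votes; values near 0 or 1 mean the trees agree."""
    return sum(x > t for t in thresholds) / len(thresholds)


# Hypothetical noisy data: the label mostly switches from 0 to 1 around x = 5.
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
ys = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]

forest = fit_bagged_stumps(xs, ys)
print(predict_proba(forest, 2), predict_proba(forest, 5.5), predict_proba(forest, 9))
```

Because each stump sees a slightly different sample, the thresholds differ, and averaging them smooths out the effect of the noisy point at x = 6.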

Gradient Boosting is another ensemble technique, but unlike Random Forest, it builds models sequentially. Each new model focuses on correcting the mistakes of the previous ones, making it highly effective at achieving high predictive accuracy.

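| How It Works | When To Use | When Not To Use |
| --- | --- | --- |
| Minimizes prediction errors by fitting each new model to the errors left by the previous ones, so every iteration concentrates on the data points that are hardest to predict. | | |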
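Here is a minimal sketch of the sequential idea for a regression problem with squared error. It assumes one-split trees on a single feature and a fixed learning rate. Production libraries add regularization, deeper trees, and other loss functions, so treat the names and data below as illustrative only.

```python
def fit_stump(xs, residuals):
    """Find the single split on x that best fits the residuals (least squares)."""
    best = None
    values = sorted(set(xs))
    for a, b in zip(values, values[1:]):
        t = (a + b) / 2
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, left_mean, right_mean)
    return best[1:]


def fit_boosting(xs, ys, n_rounds=50, learning_rate=0.1):
    """Start from the mean, then repeatedly fit a stump to what is still unexplained."""
    base = sum(ys) / len(ys)
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]  # errors of the model so far
        t, left_mean, right_mean = fit_stump(xs, residuals)
        stumps.append((t, left_mean, right_mean))
        preds = [p + learning_rate * (left_mean if x <= t else right_mean)
                 for x, p in zip(xs, preds)]
    return base, learning_rate, stumps


def predict(model, x):
    base, learning_rate, stumps = model
    return base + sum(learning_rate * (lm if x <= t else rm) for t, lm, rm in stumps)


def mse(model, xs, ys):
    return sum((predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data with a step between x = 4 and x = 5.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 3.1]

model = fit_boosting(xs, ys)
baseline = (sum(ys) / len(ys), 0.1, [])  # the mean-only starting point
print(mse(baseline, xs, ys), mse(model, xs, ys))
```

The small learning rate is a design choice: each stump only nudges the prediction, which usually makes the final model less sensitive to any single round.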

K-Means is an unsupervised learning algorithm used to discover patterns in data by assembling similar data points into clusters. It is widely used for segmentation problems where labels are not available.

| How It Works | When To Use | When Not To Use |
| --- | --- | --- |
| Assigns data points to clusters by minimizing the distance between them and cluster centroids. | | |
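The assign-then-update loop behind K-Means can be sketched as follows. This version assumes a naive initialization (the first k points) to keep the example deterministic; real implementations typically use smarter seeding and multiple restarts. The customer data is hypothetical.

```python
import math


def kmeans(points, k, max_iter=100):
    """Alternate between assigning points to the nearest centroid and recomputing centroids."""
    centroids = [points[i] for i in range(k)]  # naive init: first k points (an assumption)
    labels = None
    for _ in range(max_iter):
        new_labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        if new_labels == labels:
            break  # assignments stopped changing, so the algorithm has converged
        labels = new_labels
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return labels, centroids


# Hypothetical customers described by (visits per month, average basket size).
customers = [(1, 1), (1.5, 2), (1, 0.5), (8, 8), (9, 9.5), (8.5, 9)]

labels, centroids = kmeans(customers, k=2)
print(labels)
print(centroids)
```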

ARIMA, which stands for Autoregressive Integrated Moving Average, is a classical statistical method used for time-series forecasting. It is particularly effective for datasets in which historical patterns and trends are strong indicators of future behavior.

| How It Works | When To Use | When Not To Use |
| --- | --- | --- |
| Combines autoregression, differencing, and moving averages to model time-dependent data. | | |
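To show how differencing and autoregression fit together, here is a small sketch of the simplest useful case, ARIMA(1,1,0): difference the series once, fit an AR(1) model to the differences by least squares, then add the forecast differences back. It deliberately leaves out the moving-average term, and the function names and sales figures are assumptions for illustration. In practice a statistics library would handle estimation and order selection.

```python
def fit_arima_110(series):
    """Fit d_t = c + phi * d_(t-1) on the first differences of the series."""
    diffs = [b - a for a, b in zip(series, series[1:])]  # the "I" step: differencing
    x, y = diffs[:-1], diffs[1:]                          # the "AR" step: lag-1 regression
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    phi = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / var_x if var_x else 0.0
    c = mean_y - phi * mean_x
    return c, phi


def forecast(series, steps, c, phi):
    """Forecast differences recursively and integrate them back to the original scale."""
    last_value = series[-1]
    last_diff = series[-1] - series[-2]
    out = []
    for _ in range(steps):
        last_diff = c + phi * last_diff
        last_value += last_diff
        out.append(last_value)
    return out


# Hypothetical monthly sales with a steady upward trend.
monthly_sales = [100, 104, 109, 113, 118, 122, 127]
c, phi = fit_arima_110(monthly_sales)
print(forecast(monthly_sales, steps=3, c=c, phi=phi))
```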

Prophet is a forecasting model developed by Meta to make time-series analysis more accessible to business users. It is designed to handle common problems such as missing data, seasonality, and trend changes with minimal manual tuning.

| How It Works | When To Use | When Not To Use |
| --- | --- | --- |
| Decomposes time series into trend, seasonality, and holiday effects. | | |
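The decomposition idea can be illustrated without the library itself. The sketch below is not Prophet's API or its fitting procedure. It is a hand-rolled additive model, assuming a straight-line trend and a fixed repeating seasonal pattern, and it ignores holiday effects. The series is synthetic so that the recovered components can be checked.

```python
def fit_additive(series, period):
    """Fit y = intercept + slope * t + seasonal[t % period] in two passes."""
    n = len(series)
    mean_t, mean_y = (n - 1) / 2, sum(series) / n
    slope = (sum((t - mean_t) * (y - mean_y) for t, y in enumerate(series))
             / sum((t - mean_t) ** 2 for t in range(n)))
    intercept = mean_y - slope * mean_t
    # What the trend does not explain is averaged by position within the cycle.
    detrended = [y - (intercept + slope * t) for t, y in enumerate(series)]
    seasonal = [sum(detrended[p::period]) / len(detrended[p::period]) for p in range(period)]
    return intercept, slope, seasonal


def predict(model, t):
    intercept, slope, seasonal = model
    return intercept + slope * t + seasonal[t % len(seasonal)]


# Synthetic series: base 100, growth of 2 per period, and a 4-step seasonal pattern.
pattern = [3, -3, -3, 3]
series = [100 + 2 * t + pattern[t % 4] for t in range(12)]

model = fit_additive(series, period=4)
print(model)
print(predict(model, 12))
```

Separating the components this way is what makes such forecasts easy to explain: the trend and the seasonal lift can each be inspected on their own.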

LSTM, or Long Short-Term Memory, is a type of recurrent neural network designed to handle sequential and time-dependent data. It is particularly useful when long-term dependencies in the data need to be captured.

| How It Works | When To Use | When Not To Use |
| --- | --- | --- |
| Uses memory cells to retain long-term dependencies in data sequences. | | |
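To show what a memory cell actually does, here is one time step of a single-unit LSTM written out in plain Python. The weights are set by hand purely for illustration; a real network has many units and learns its weights with a deep learning framework. The gate names follow the standard LSTM formulation.

```python
import math


def sigmoid(z):
    return 1 / (1 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, weights):
    """One time step of a single-unit LSTM.

    weights maps each gate name to (input weight, recurrent weight, bias).
    Returns the new hidden state h and the new cell (memory) state c.
    """
    def gate(name, activation):
        w_x, w_h, b = weights[name]
        return activation(w_x * x + w_h * h_prev + b)

    f = gate("forget", sigmoid)        # how much of the old memory to keep
    i = gate("input", sigmoid)         # how much new information to write
    g = gate("candidate", math.tanh)   # the new information itself
    o = gate("output", sigmoid)        # how much of the memory to expose
    c = f * c_prev + i * g
    h = o * math.tanh(c)
    return h, c


# Hand-picked weights (an assumption): the forget gate stays open and the input gate stays shut.
remember = {"forget": (0, 0, 10), "input": (0, 0, -10),
            "candidate": (1, 0, 0), "output": (0, 0, 0)}

h, c = 0.0, 1.0  # start with a value of 1.0 stored in memory
for x in [0.3, -0.8, 0.5, 0.9, -0.2]:
    h, c = lstm_step(x, h, c, remember)
print(c)  # the stored value is almost unchanged after five noisy inputs
```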

| Sl No | Algorithm | Accuracy | Interpretability | Speed |
| --- | --- | --- | --- | --- |
| 1. | Random Forest | High | Medium | Medium |
| 2. | Gradient Boosting | Very High | Low | Slow |
| 3. | K-Means | Medium | High | Fast |
| 4. | ARIMA | Medium | High | Fast |
| 5. | Prophet | Medium | High | Fast |
| 6. | LSTM | Very High | Low | Slow |

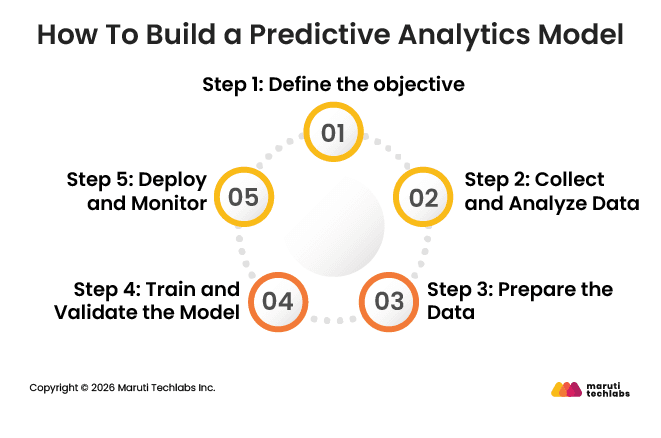

Building a predictive analytics model is a structured process that goes beyond simply selecting an algorithm. It requires aligning business goals with data, regularly updating the model, and making sure it delivers measurable value in real-life situations.

Start by clearly identifying the business problem and the outcome you want to predict. This could be reducing customer churn, forecasting sales, or detecting fraud.

A well-defined objective ensures the model is aligned with measurable KPIs and business impact rather than simply technical performance.

Gather data from multiple sources such as CRM systems, transactional databases, APIs, and third-party platforms. Analyze this data to identify patterns, inconsistencies, and gaps that may affect model effectiveness.

For example, in retail, combining purchase history with seasonal trends might significantly improve demand predictions.

Clean, transform, and structure the data to make it usable for modeling. This includes managing missing values, removing duplicates, normalizing variables, and engineering relevant features.
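A minimal sketch of these preparation steps in plain Python might look like this. The field names (`customer_id`, `monthly_spend`) and the choice of median imputation and min-max scaling are assumptions for illustration; the right choices depend on the data and the model.

```python
import statistics


def prepare(rows, field):
    """Remove exact duplicates, fill missing values with the median, and scale to [0, 1]."""
    # 1. Remove exact duplicate rows while keeping the original order.
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))

    # 2. Impute missing values with the median of the known values.
    known = [r[field] for r in unique if r[field] is not None]
    median = statistics.median(known)
    for r in unique:
        if r[field] is None:
            r[field] = median

    # 3. Engineer a normalized feature using min-max scaling.
    lo, hi = min(r[field] for r in unique), max(r[field] for r in unique)
    for r in unique:
        r[field + "_scaled"] = (r[field] - lo) / (hi - lo) if hi > lo else 0.0
    return unique


# Hypothetical raw records with one duplicate and one missing value.
raw = [
    {"customer_id": 1, "monthly_spend": 100.0},
    {"customer_id": 2, "monthly_spend": None},
    {"customer_id": 1, "monthly_spend": 100.0},
    {"customer_id": 3, "monthly_spend": 300.0},
    {"customer_id": 4, "monthly_spend": 200.0},
]

clean = prepare(raw, "monthly_spend")
for row in clean:
    print(row)
```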

In real-world situations, data preparation often takes the most time but has the greatest effect on model effectiveness.

Select the appropriate algorithm for your objective and train it on historical data. Validate the model using methods such as cross-validation and held-out test datasets to check that it performs well on data it has not seen during training.
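The mechanics of k-fold cross-validation can be sketched as follows. The `fit` and `predict` callables are placeholders for whichever algorithm is being evaluated, and the mean-only model is a hypothetical baseline. For time-ordered data, splits that always train on the past and test on the future are usually a better fit than the plain folds shown here.

```python
def k_fold_indices(n, k):
    """Yield (train_indices, test_indices) so that every row is tested exactly once."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size


def cross_validate(xs, ys, fit, predict, k=5):
    """Return the mean absolute error on each held-out fold."""
    scores = []
    for train, test in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors = [abs(predict(model, xs[i]) - ys[i]) for i in test]
        scores.append(sum(errors) / len(errors))
    return scores


# Hypothetical baseline model: always predict the training mean.
def fit_mean(train_x, train_y):
    return sum(train_y) / len(train_y)


def predict_mean(model, x):
    return model


xs = list(range(10))
ys = [2.0 * x for x in xs]
print(cross_validate(xs, ys, fit_mean, predict_mean, k=3))
```

A large gap between the fold scores is itself useful information, since it suggests the model's performance depends heavily on which data it happened to see.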

For instance, a financial institution may test fraud detection models against past transaction data to evaluate accuracy.

Integrate the model into business workflows, such as embedding it into dashboards, applications, or automated solutions. Continuously monitor its performance to detect model drift and ensure predictions stay accurate over time. Periodic updates and retraining are essential as business conditions and data patterns evolve.
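One common way to watch for drift is to compare the distribution of a feature at training time with its recent distribution. The sketch below computes a Population Stability Index (PSI) using equal-width bins taken from the baseline. The bin count, the `eps` floor, and the sample data are assumptions, and any alert threshold should be tuned to the use case.

```python
import math


def psi(baseline, recent, n_bins=5, eps=1e-6):
    """Population Stability Index: 0 means identical bin shares, larger means more drift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1  # clamp values outside the baseline range
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(baseline), shares(recent)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical feature values at training time and after a shift in customer behavior.
training_values = list(range(100))
shifted_values = [v + 30 for v in training_values]

print(psi(training_values, training_values))
print(psi(training_values, shifted_values))
```

A rising PSI does not say the model is wrong, only that its inputs no longer look like the training data, which is a reasonable trigger to review or retrain it.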

Use cases of predictive analytics span industries, helping organizations enhance decision-making, reduce risks, and uncover fresh growth opportunities. These predictive analytics model examples show how businesses apply data-based insights in real-life situations.

Insurance providers use predictive analytics to improve risk assessment, pricing methods, and policy design. Instead of relying only on traditional actuarial methods, insurers now evaluate large volumes of customer data, including demographics, behavioral patterns, and historical claims.

By comparing policyholders with similar profiles, predictive models estimate the likelihood of future claims with better accuracy. This allows insurers to set more accurate premiums, construct tailored coverage plans, and reduce exposure to high-risk policies.

Retailers and consumer packaged goods companies use predictive analytics to improve marketing effectiveness, customer engagement, and inventory planning. By analyzing past campaign performance, customer behavior, and purchase patterns, businesses can identify which strategies drive the highest returns.

Predictive models help forecast the success of future promotions, enabling firms to allocate budgets more efficiently. This reduces wasted spend and improves marketing ROI.

In financial services, predictive analytics plays a key role in risk management, fraud prevention, and investment decision-making. Financial institutions use predictive models to assess loan default probabilities by analyzing credit history, transaction behavior, and macroeconomic indicators.

This enables lenders to maintain a balanced portfolio while decreasing risk exposure. At the same time, predictive analytics strengthens fraud prevention systems by spotting anomalies in real-time transactions, enabling institutions to act quickly on suspicious activity.

Predictive analytics in healthcare plays a key role in improving patient care, reducing costs, and streamlining resource allocation. By analyzing historical patient data, including medical history, demographics, and previous admissions, predictive models can identify individuals at risk of complications or readmissions.

This enables healthcare workers to take preventive measures, design personalized treatment plans, and intervene quickly when risks are identified. As a result, clinical outcomes improve and operational efficiency increases, especially when leveraging strategies such as hyper-personalization and predictive analytics.

Predictive analytics has evolved from a supporting capability to a necessity for modern businesses. By using the right models and algorithms, organizations can move beyond reactive decision-making and proactively shape outcomes across operations, customer experience, and risk management.

However, success with predictive analytics is not simply about choosing advanced algorithms. It requires a clear understanding of business objectives, high-quality data, and continuous model optimization. Organizations intending to operationalize predictive analytics can leverage AI strategy & readiness services to align data initiatives with business goals.

As data continues to grow in volume and complexity, the latest predictive analytics models in 2026 will play an even greater role in helping businesses stay competitive, agile, and future-ready.

With extensive experience in building data-driven solutions, Maruti Techlabs helps organizations design, develop, and deploy predictive analytics models aligned with specific business goals. From data engineering and model selection to deployment and continuous optimization, the team delivers end-to-end support across the analytics lifecycle.

What sets Maruti Techlabs apart is its ability to combine resilient data engineering with custom AI development services. This enables businesses to go beyond basic analysis and build intelligent models that continuously learn, adapt, and improve forecasting precision.

A leading automotive parts manufacturer struggled to forecast product demand accurately, resulting in inefficiencies in inventory and supply chain planning. Maruti Techlabs addressed this by implementing an LSTM-based predictive model to examine historical sales data and identify demand patterns.

Impact:

The right predictive analytics model depends on your business objective and data type. There are several predictive analytics model examples, including classification, clustering, and forecasting models, each suited to different use cases. In practice, organizations often combine multiple predictive analytics models to improve accuracy and insights.

Forecasting models are used for numerical predictions such as sales, and time series models are best when trends and seasonality matter.

Yes, organizations frequently combine multiple predictive models to achieve better results. For example, a retail business may use clustering to segment customers and forecasting models to predict demand for each segment. This approach captures different data patterns and improves overall decision-making.

Every model comes with trade-offs. Complex models such as ensembles and deep learning often yield higher accuracy but are harder to interpret and require more resources.

Simpler models are easier to understand but may not perform well on complicated datasets. The right balance depends on business priorities such as speed, accuracy, and explainability.

Model interpretability helps businesses understand how predictions are made, which is necessary for compliance and trust. In areas such as finance and healthcare, being able to explain decisions is frequently required. Even though advanced models may be more accurate, simpler models are often preferred when transparency is required.

Transfer learning is a technique where a model trained on one problem is reused for a similar task. It reduces training time and the need for large datasets. This approach is especially useful in situations where data is limited or expensive to collect.

Ensembling combines multiple models to improve prediction accuracy and stability. Instead of relying on a single model, it aggregates outputs from several models to reduce errors. Common techniques include bagging, boosting, and stacking.

Ensemble models are best used when accuracy and reliability are critical, such as in fraud detection, risk analysis, and recommendation systems. They perform well in complex scenarios but may require more computational resources and can be harder to interpret.

Both ARIMA and Prophet are effective for sales forecasting, but the choice depends on the data. ARIMA works well for stable datasets with consistent trends and requires more manual tuning. Prophet is easier to use, handles seasonality and missing data better, and is more suitable for business forecasting with fluctuating patterns.

Simpler algorithms like linear regression, logistic regression, and shallow decision trees tend to work best for small datasets. These models require less data to train effectively and are generally less prone to overfitting than complex models such as deep learning or large ensembles.

In predictive and prescriptive analytics models, controllable variables are the inputs a business can directly adjust to influence outcomes, such as pricing, marketing spend, discounts, inventory levels, and production capacity. Predictive models take these variables as given, along with uncontrollable factors like market trends or seasonality, to forecast what is likely to happen. Prescriptive models go a step further and recommend how to set them.